Naive Bayes is often not given enough credit. People learning ML often jump straight to XGBoost or Random Forest models. While these models are good and will often get the job done, it is also worth knowing Naive Bayes, a Bayesian ML model that was once used in production by tech giants like Google.

But before we dive deep into Naive Bayes, we have to learn about the Bayes theorem itself.

It may seem daunting, but at its core the formula is very simple to understand: all it provides is a way to calculate the probability of A given that B has already happened. It is equal to the probability of B given that A has already happened, multiplied by the probability of A, divided by the probability of B.
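Written in symbols, with P(A | B) read as "the probability of A given B", the statement above is:

$$
P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)}
$$

Here P(A) is the prior, P(B | A) is the likelihood, and P(A | B) is the posterior. The examples in this post write the same conditional with a slash, as in P(A/B).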

You might be put off by mathematical jargon such as posterior and prior, but if you think of it in these simple terms, it is a very simple formula.

Let’s take an example, and suppose that we don’t know Bayes theorem.

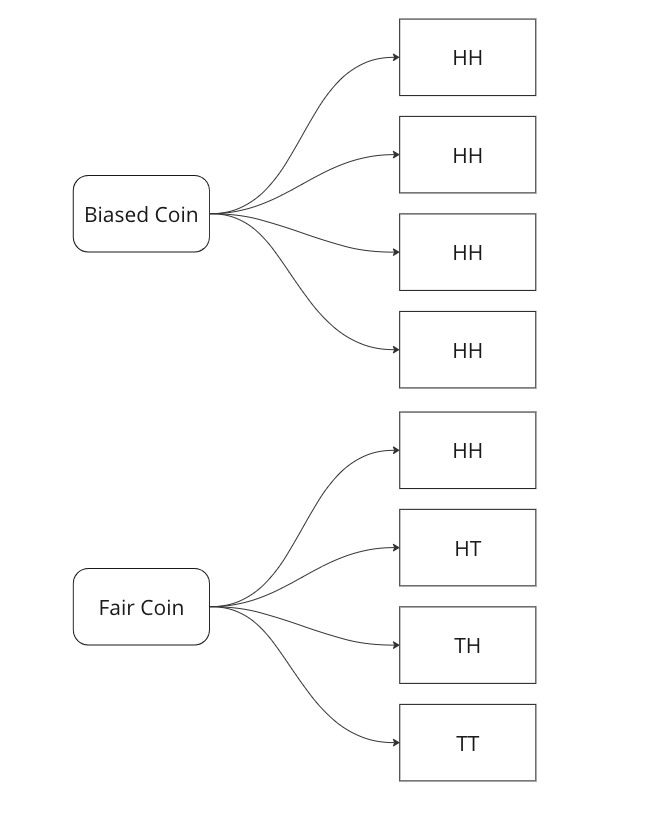

We are told that a coin is either fair or biased (the biased coin always comes up heads). We observe two heads in a row, and we have to find the probability that the coin being tossed is the fair one.

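We start by graphing all outcomes of two coin tosses for both a fair and a biased coin. Each coin has four equally likely outcomes: HH, HT, TH and TT for the fair coin, and HH four times over for the biased coin. Now we know that two heads came up in a row, so we update our sample space with this information, which leaves five sample points.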

Here we can see that only 1 of the 5 sample points can be attributed to a fair coin, so P(fair coin/HH) = 1/5. Similarly, P(biased coin/HH) = 4/5, since 4 of the 5 sample points can be attributed to the biased coin.
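The counting argument above can be reproduced in a few lines of Python. This is only a sketch: it assumes the biased coin can be modelled as a coin with heads on both faces, and the names `coins`, `samples` and `observed` are made up for illustration.

```python
from fractions import Fraction
from itertools import product

# Assumption: the biased coin is modelled as having heads on both faces,
# so each toss still has two equally likely faces, both reading H.
coins = {"fair": "HT", "biased": "HH"}

# Every (coin, two-toss outcome) pair is an equally likely sample point.
samples = [
    (coin, first + second)
    for coin, faces in coins.items()
    for first, second in product(faces, repeat=2)
]

# Keep only the sample points consistent with the observation HH.
observed = [coin for coin, outcome in samples if outcome == "HH"]

p_fair = Fraction(observed.count("fair"), len(observed))
p_biased = Fraction(observed.count("biased"), len(observed))
print(p_fair, p_biased)  # 1/5 4/5
```

Of the eight sample points, five survive the HH filter: one from the fair coin and four from the biased coin.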

Let us see if we can arrive at the same answer using the Bayes formula.

Breaking down the calculations –

- P(HH/fair coin) = 1/4 – we saw above that a fair coin gives two heads in 1 out of 4 cases.

- P(fair coin) = 1/2 – we know that the coin could be biased or fair. This is what is known as a prior; here it is equally likely that the coin is biased or fair.

- P(HH) = 1/2 × 1 + 1/2 × 1/4 = 5/8 – this is where most of the confusion about the Bayes theorem arises. We have to calculate the probability of getting two heads considering both scenarios. The biased coin always gives heads, so its probability of HH is 1, and there is a 1/2 chance of selecting it, so we multiply by 1/2. Similarly, 1/4 is the probability of getting HH with a fair coin, and there is a 1/2 probability of selecting it.
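Plugging the three quantities above into the Bayes formula can be checked with a short Python sketch. The dictionary names `prior`, `likelihood` and `posterior` are chosen for illustration, and exact fractions are used so the result can be compared directly with the counting answer.

```python
from fractions import Fraction

# Priors: the coin is equally likely to be fair or biased.
prior = {"fair": Fraction(1, 2), "biased": Fraction(1, 2)}

# Likelihoods: probability of seeing HH under each hypothesis.
likelihood = {"fair": Fraction(1, 4), "biased": Fraction(1)}

# Evidence P(HH): sum over both coins of prior * likelihood.
evidence = sum(prior[coin] * likelihood[coin] for coin in prior)

# Bayes formula applied to each hypothesis.
posterior = {
    coin: prior[coin] * likelihood[coin] / evidence for coin in prior
}

print(evidence)                                # 5/8
print(posterior["fair"], posterior["biased"])  # 1/5 4/5
```

The posterior for the fair coin is (1/4 × 1/2) / (5/8) = 1/5, the same value obtained by counting sample points.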

In the next part we will see how we can use this to create a very basic classifier in Python.